A large window frame manufacturer in the CIS reached out to us because its call center quality assessment was drowning in manual work. The team wanted to stop relying on random sampling and subjective evaluation and start making data-driven decisions about operator performance and customer satisfaction.

Challenge

Before automation, call center quality assessment was predominantly manual. Out of 33,000 monthly calls, only a tiny fraction could be evaluated.

- The Chief Digital Officer and the Call Center Director occasionally checked randomly selected call recordings to evaluate operator efficiency. The criteria were response time (3-5 seconds) and conversation productivity, the latter judged subjectively by whoever was listening.

- The manual approach made it nearly impossible to identify inefficient operators, track workload distribution or spot bottlenecks in call center operations.

- There was no scalable way to present performance data to the C-suite. The only measure of effectiveness was what two managers had heard in their limited random sampling.

As a result, the company lacked adequate assessment mechanisms and had no visibility into critical operational issues such as operator burnout, patterns of customer dissatisfaction or conflict situations.

Solution

We were excited to automate such an important process for the client. The first step was to set specific objectives:

- reduce manual labor for listening to and evaluating calls

- ensure objective assessment of operator and manager performance quality

- obtain structured data about call objectives and outcomes

- automatically identify problem areas (customer dissatisfaction, operator burnout, conflicts)

- visualize data in clear dashboards

The technical core of the project consists of five components:

- n8n for process orchestration (self-hosted)

- Supabase for data recording and retention

- Deepgram for automatic speech recognition via API, with the ability to recognize company-specific key terms (e.g. ‘anti-burglary hardware’ or ‘soundproofing’)

- OpenAI GPT-4o for text analysis and sentiment recognition

- Lovable for frontend dashboards with dynamic filters (operators, departments, brands)

The automation runs in five steps:

- Call transcription. Deepgram converts audio recordings to text with high accuracy, preserving technical terminology specific to the window manufacturing business.

- Sentiment analysis and classification. GPT-4o analyzes conversations, classifies clients according to NPS categories (Detractor, Passive, or Promoter) and identifies potential conflicts.

- Performance scoring. The system evaluates each call against structured criteria, such as adherence to the call stages (greeting, need identification, problem solving, closing) and quality control (operator behavior, conflict prevention skills).

- Summary generation. For every call, the system produces a summary and an assessment conclusion with specific scores and improvement recommendations.

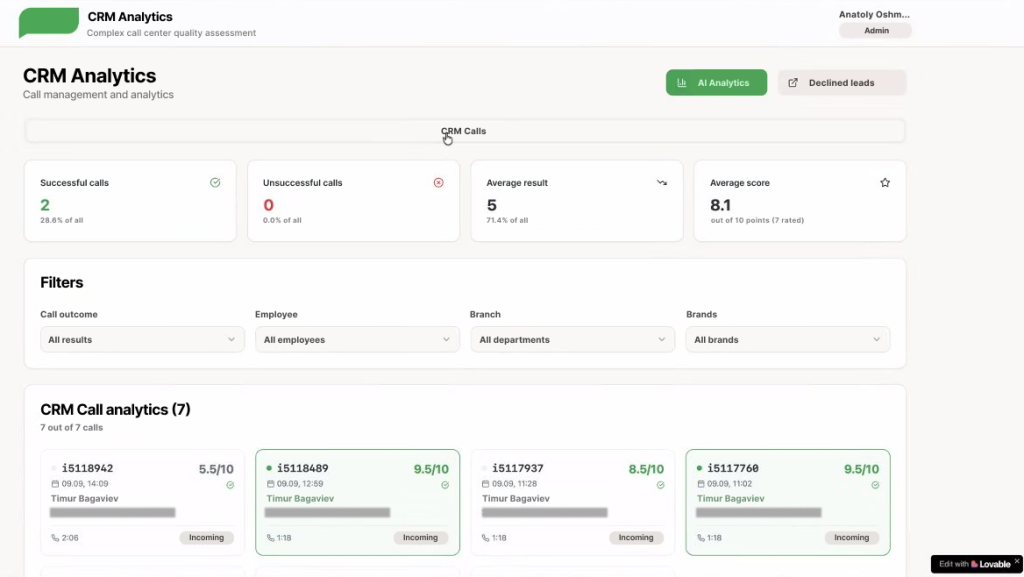

- Dashboard visualization. All data flows into dynamic dashboards where managers can filter by operators, departments, brands, and performance metrics to spot trends and issues quickly.
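The case study does not publish its prompt or scoring formula. As a rough sketch, assuming GPT-4o is asked to return its assessment as JSON, the pipeline could validate that reply before storing it and derive an overall 0-10 score. Only the NPS categories and the four call stages come from the description above; the field names, the two 0-10 quality sub-scores, the `parse_assessment` helper and the 50/25/25 weighting are hypothetical.

```python
import json
from dataclasses import dataclass

# From the case study: NPS categories and call stages. Everything else is hypothetical.
NPS_CATEGORIES = {"Detractor", "Passive", "Promoter"}
CALL_STAGES = ("greeting", "need_identification", "problem_solving", "closing")


@dataclass
class CallAssessment:
    nps_category: str
    stages_completed: dict[str, bool]
    behavior_score: float             # hypothetical 0-10 sub-score: operator behavior
    conflict_prevention_score: float  # hypothetical 0-10 sub-score: conflict prevention
    conflict_detected: bool
    summary: str

    @property
    def overall_score(self) -> float:
        """Hypothetical weighting: 50% stage coverage, 25% for each quality sub-score."""
        stage_score = 10 * sum(self.stages_completed.values()) / len(CALL_STAGES)
        return round(
            0.5 * stage_score
            + 0.25 * self.behavior_score
            + 0.25 * self.conflict_prevention_score,
            1,
        )


def parse_assessment(raw: str) -> CallAssessment:
    """Validate the model's JSON reply before it is written to the database."""
    data = json.loads(raw)
    if data["nps_category"] not in NPS_CATEGORIES:
        raise ValueError(f"unexpected NPS category: {data['nps_category']!r}")
    # A stage the model did not mention is treated as not completed.
    stages = {stage: bool(data["stages"].get(stage, False)) for stage in CALL_STAGES}
    for key in ("behavior_score", "conflict_prevention_score"):
        if not 0 <= data[key] <= 10:
            raise ValueError(f"{key} out of range: {data[key]}")
    return CallAssessment(
        nps_category=data["nps_category"],
        stages_completed=stages,
        behavior_score=float(data["behavior_score"]),
        conflict_prevention_score=float(data["conflict_prevention_score"]),
        conflict_detected=bool(data["conflict_detected"]),
        summary=data["summary"],
    )


# Example reply: three of four stages covered, good behavior and conflict handling.
reply = json.dumps({
    "nps_category": "Passive",
    "stages": {"greeting": True, "need_identification": True,
               "problem_solving": True, "closing": False},
    "behavior_score": 8,
    "conflict_prevention_score": 9,
    "conflict_detected": False,
    "summary": "Customer asked for a quote; no next step agreed.",
})
assessment = parse_assessment(reply)
print(assessment.overall_score)  # 8.0
```

Rejecting malformed replies at this point keeps a single bad model output from silently skewing the dashboard metrics.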

The solution is connected directly to the CRM, so every call is processed automatically without manual intervention.
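The case study does not describe the CRM integration itself. One common pattern, assumed here, is a webhook that fires when a call ends; because webhook deliveries are often retried, the handler should process each call only once. In an n8n setup this logic might sit behind a Webhook trigger node. The payload fields `call_id` and `recording_url` and the `CallEventDispatcher` class are hypothetical, and the in-memory set stands in for what would more likely be a unique constraint on a Supabase table.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class CallEventDispatcher:
    """Hypothetical handler for 'call finished' events pushed by the CRM."""
    # Stand-in for the processing workflow, called as process(call_id, recording_url).
    process: Callable[[str, str], None]
    seen: set[str] = field(default_factory=set)

    def handle(self, payload: dict) -> bool:
        """Return True if the call was dispatched, False if it was a duplicate event."""
        call_id = payload.get("call_id")
        recording_url = payload.get("recording_url")
        if not call_id or not recording_url:
            raise ValueError("payload needs call_id and recording_url")
        if call_id in self.seen:
            return False  # the CRM re-sent an event we already handled
        self.process(call_id, recording_url)
        # Marked only after processing succeeds, so a failed call can be retried.
        self.seen.add(call_id)
        return True


processed = []
dispatcher = CallEventDispatcher(process=lambda cid, url: processed.append(cid))
event = {"call_id": "c-001", "recording_url": "https://example.com/c-001.mp3"}
print(dispatcher.handle(event))  # True
print(dispatcher.handle(event))  # False: duplicate delivery is ignored
```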

Result

More than 7,000 calls have been analyzed so far, and even this volume has given the team unprecedented visibility into call center operations.

- Time savings are dramatic. AI analysis takes 2-3 minutes per call, compared with 10 minutes for manual evaluation. This enables regular assessment rounds instead of occasional random sampling.

- An objective multi-factor scoring system replaced subjective evaluation. Calls scoring above 8/10 are considered successful, and any call scoring below that threshold triggers management support for the operator who handled it.

- Cross-department insights emerged. Because the findings are now representative and valid, they can also inform the company’s sales and marketing teams. For example, the sales team can adjust its messaging based on what callers raise during their calls.
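The two headline numbers above can be made concrete with a little arithmetic and a threshold rule. This sketch assumes the 10-minute and 2-3-minute figures are flat per-call averages, and it applies them to the 33,000 monthly calls mentioned earlier to show why full manual coverage was out of reach. The helper names and the operator scores are hypothetical, as is the choice to leave a score of exactly 8.0 unflagged, since the text only says "above 8" and "below" it.

```python
from collections import defaultdict

# Figures from the case study; treating them as flat per-call averages is an assumption.
MONTHLY_CALLS = 33_000
MANUAL_MINUTES = 10          # manual evaluation time per call
AI_MINUTES_RANGE = (2, 3)    # AI analysis time per call
SUCCESS_THRESHOLD = 8.0      # calls scoring above 8/10 count as successful


def hours_needed(calls: int, minutes_per_call: float) -> float:
    """Total evaluation time, in hours, for a batch of calls."""
    return calls * minutes_per_call / 60


def time_reduction(manual: float, automated: float) -> float:
    """Fractional cut in per-call evaluation time."""
    return (manual - automated) / manual


def calls_needing_support(scored_calls):
    """Group below-threshold scores by operator.

    scored_calls: iterable of (operator, score) pairs. A score of exactly 8.0
    is not flagged here; that boundary choice is an assumption.
    """
    flagged = defaultdict(list)
    for operator, score in scored_calls:
        if score < SUCCESS_THRESHOLD:
            flagged[operator].append(score)
    return dict(flagged)


manual_hours = hours_needed(MONTHLY_CALLS, MANUAL_MINUTES)
best, worst = (time_reduction(MANUAL_MINUTES, m) for m in AI_MINUTES_RANGE)
print(f"Manual review of every monthly call: {manual_hours:,.0f} h")  # 5,500 h
print(f"Per-call time cut: {worst:.0%} to {best:.0%}")                # 70% to 80%

# Hypothetical operators and scores, for illustration only.
sample = [("Anna", 9.1), ("Boris", 6.5), ("Anna", 7.2), ("Boris", 8.4), ("Vera", 8.0)]
print(calls_needing_support(sample))  # {'Boris': [6.5], 'Anna': [7.2]}
```

The AI's 2-3 minutes is processing time rather than a manager's listening time, and calls can presumably be analyzed in parallel, so the saving in staff hours is larger than the per-call percentage alone suggests.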

Learnings

We feel this point is not stressed enough among AI automation practitioners today: AI delivers value when it is grounded in real operational needs.

- Company-wide enthusiasm matters. The C-suite ran an all-company AI training and invited team leads to propose hands-on AI projects for their departments. This top-down support combined with bottom-up initiative drove successful adoption.

- Realistic expectations prevent disappointment. The team can’t yet tie AI performance to the bottom line, but they see clear operational improvements. They approach AI as a practical tool, not a silver bullet.

- Future scaling is now possible. The company plans to extend AI automation to employee training: building a chatbot to search through extensive documentation and creating compressed training modules with auto-generated test questions.

In this project, AI served as a lever for scaling important business processes. It eliminated manual assessment work and now lets managers focus on coaching, strategic improvements, and addressing real performance issues with data-backed insights.